Chapter 2. The Changes Ahead

Let’s look at the dynamics in motion. Individually, these shifts might seem manageable. Collectively, they turn forward-looking thinking from a strategic luxury into a survival necessity.

1. The Bubble Ego

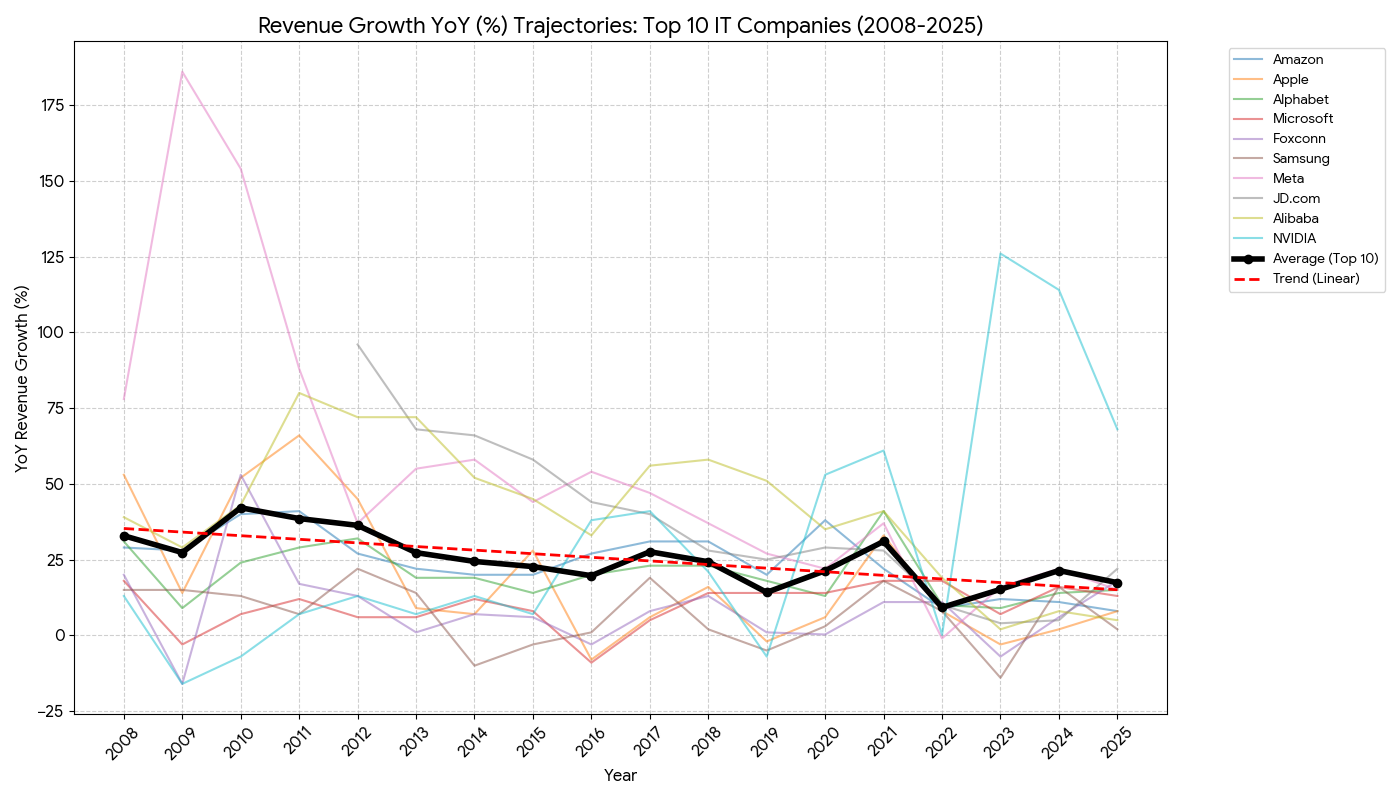

When we look at revenue growth for leading IT companies over the last 15 years, the trend is undeniable: the market is slowing down. This deceleration becomes even more glaring if we temporarily exclude exceptional outliers like Nvidia, which is currently riding the crest of the AI hype cycle.

Revenue—real money—remains a far better proxy for structural reality than company valuation. What makes this slowdown so damning is that it persists despite a decade of powerful tailwinds: the “Digital Transformation” euphoria of the 2010s, the extraordinary profitability boom during the COVID-19 pandemic, and the recent hysteria around artificial intelligence. None of these forces has been sufficient to reverse the long-term decline in growth rates.

Even major analysts are looking elsewhere for the next big thing[1], treating digital merely as an enabler rather than the main event.

Here lies the paradox of success. This outcome is precisely what the champions of Digital Transformation promised: IT as an abundant, universal good—routine, on-demand, and infrastructure-like. But be careful what you wish for. Once IT truly becomes infrastructure, the halo of being “the frontier of humanity” inevitably shifts elsewhere.

Sooner or later, this forces top executives to confront a question they have successfully dodged for decades: how do we remain the denominators of the future? Do we still command the leverage, growth potential, and narrative power we once took for granted?[2]

We must admit a structural dependency that was previously denied: the IT sector is now tethered to overall economic growth. As the global economy stagnates, IT providers—who now serve everyone else—cannot escape the gravitational pull.

[1] McKinsey’s 2025 analysis highlights the "next big arenas" toward 2040: electrification, bio-frontiers, and hard tech (drones, satellites). Crucially, while these arenas are enabled by digital tech, the core IT layer itself is becoming commoditized.

[2] Some believe they hold the "AGI joker," but this ignores a likely alternative: the replacement of human-led software factories with cloud-based "Intelligence Engines" (as Microsoft calls them). If software becomes a utility, the "visionary" tech CEO becomes a utility manager.

2. The Infant Behemoth

The modern IT industry is young, but biology eventually catches up to everyone. Organizational maturity isn't a choice; it's a diagnosis. For some, it arrived in 2023; for others, it waits in 2029. But all living organizations age.

At some point, founders and early leaders must transfer control. The original visionary impulse must be replaced by something durable. Belief gives way to plans. Founder intuition is substituted with shared foresight.

If this substitution doesn't happen deliberately, the organization drifts into a state of random search: competing opportunistically for resources, chasing narratives, and grabbing at adjacent markets without a coherent direction. Over time, such systems lose more opportunities than they capture—until momentum stalls entirely.

A parallel shift is hitting the workforce. The IT industry is graying rapidly[1]. Senior professionals are staying put, while fewer juniors are entering the market. This undermines the classic apprenticeship model, yet it mirrors a pattern long familiar in "boring" mature industries like Oil & Gas, FMCG, or logistics: sectors where, ironically, Strategic Foresight has historically been more common than in tech.

Soon, "Founded in 2002" will sound old. Simultaneously, the number of genuinely new companies is collapsing,[2] even if headline investment figures are temporarily inflated by AI speculation.

A particularly dangerous aspect of this transition is emerging in leading AI labs like OpenAI, Meta, or xAI. These organizations are seeing unusually high turnover among key personnel. It is increasingly unclear where the “carrier of the future” resides: within the hiring organization, or in the individuals themselves?

When a single researcher can raise capital independently before releasing a product—a feat unthinkable in previous eras—the company ceases to be the temple. It becomes merely a layover.

[1] Fortune reports that in Silicon Valley, "Gen Z staff was cut in half as the average age went up by 5 years." We should expect these stats to worsen as AI coding agents replace junior roles.

[2] According to Crunchbase data, from 2020 to 2023, new startup formation in the U.S. was on pace to decrease by 86%. Israel and the EU saw similar declines (89% and 87%). While AI investment caused a blip, the shutdown rates grew just as fast.

3. The "Not My Problem" Defense

As an organization’s footprint expands, its license to "just build" expires. Once critical infrastructure depends on your code, reliability, security, and governance stop being optional costs and start being survival metrics. You are obligated to prevent the next CrowdStrike outage or Equifax leak, not just apologize for it.

At the same time, Big Tech can no longer pretend to be a neutral pipe. Platform services—social media, streaming, gaming, and AI—don't just host public opinion; they engineer it. You cannot compete with governments for influence while simultaneously pleading ignorance of societal outcomes. That defense is dead.

The constraints are tightening everywhere. Hyperscale data centers cannot drain national power grids without giving something back. Extreme wealth concentration attracts regulatory predators—especially when the world’s richest people are all tech titans. And when software disrupts physical industries, it isn't just "efficiency"; it's a political issue regarding labor markets and regional stability.

You can try to postpone these tensions. You can point to the "AI Arms Race" with China as an excuse to bypass regulation. But despite the patriotic narratives, the trend is unmistakable: the regulatory noose is tightening.

Over the last decade, this pressure has materialized on three fronts:

- Taxation: Authorities are tired of the "Double Irish" and other accounting gymnastics. They want taxes paid where value is generated, especially within the EU’s aggressive framework.

- Antitrust: Agencies are blocking mergers, revisiting past deals, and handing out massive fines.[1] The era of unchecked acquisition is over.

- Social Harm: Ombudsmen, courts, and parents are invoking child protection and mental health to limit access to social networks, games, and AI.

In some nations, IT firms are now expected to digitize lagging sectors as a public duty. In others, tech holdings are fleeing the digital realm entirely, diversifying into "real" sectors like energy, healthcare, or logistics to secure tangible assets.

The conclusion is unavoidable: the IT industry is too big to hide. The era where external context was just background noise is over. With the emergence of AI, we are drafting a new social contract—one that defines not just what technology can do, but what it is allowed to do.

[1] In response, Big Tech has pivoted to acqui-hiring: poaching key teams (such as the founders of Windsurf or Inflection AI) while licensing the tech, effectively swallowing the company without technically buying it.

4. The Gartner Curse

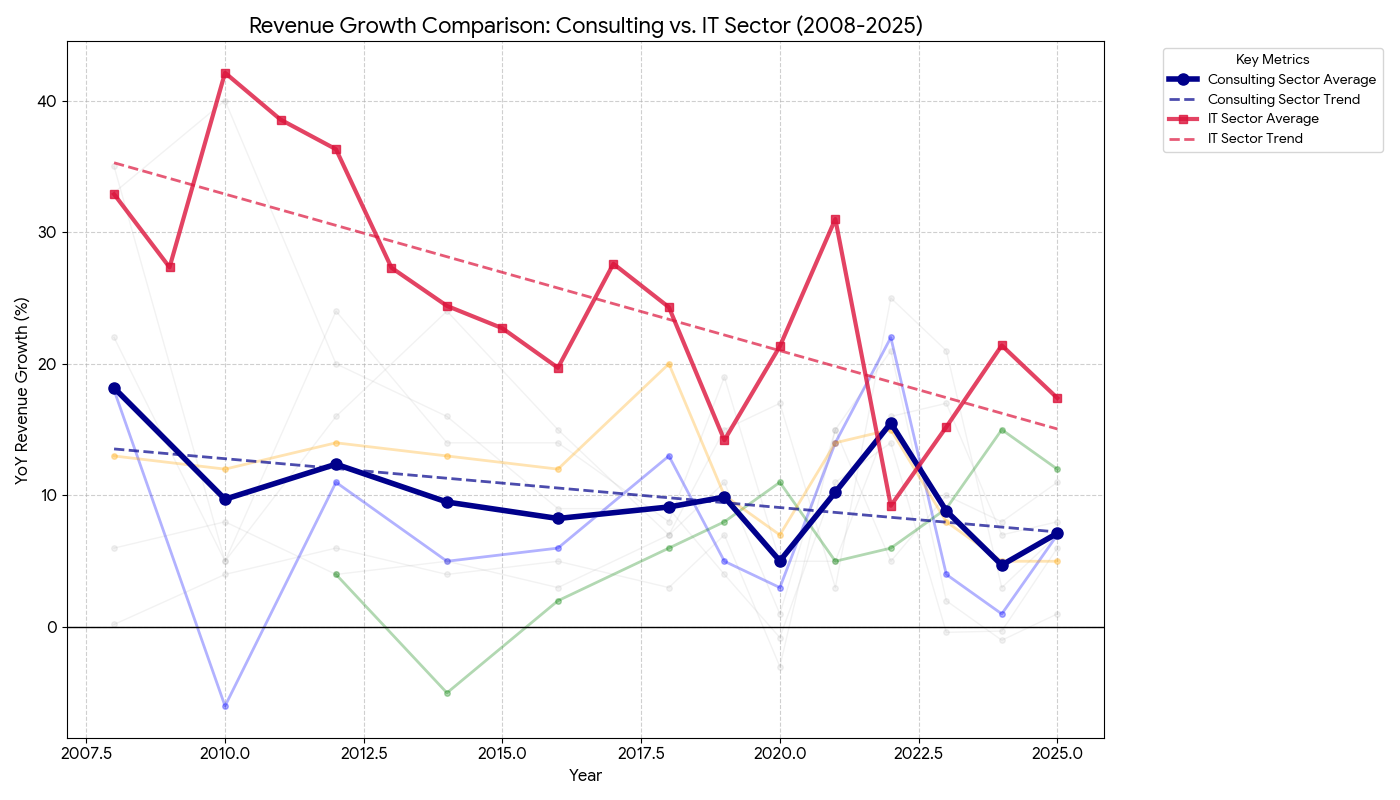

The revenues of the great "explainers"—research, analytics, and consulting giants—are following the same trajectory as the IT industry they monitor: growth is slowing. This deceleration wasn't fixed by the pandemic boom or the AI hype.

The institutions that historically sold the future are now struggling to survive the present.

Their relevance is eroding from the outside in. Watch the marketing of a Gartner-like organization; it’s painful. They are trying to sell authority to a generation raised on YouTube, X, and Discord using the tools of the 1990s. Their prestige was built in a world of information scarcity, gated reports, and three-martini lunches—conditions that no longer exist.

For a modern tech leader, the value proposition is collapsing. Why pay $50,000 for a subscription when:

- You can get a "mega-report" on future trends for free from agile groups like the Future Today Strategy Group (FTSG)?

- The expensive reports often miss the mark just as badly as the free ones?[1]

- Most disruptively, you can ask ChatGPT to compile a tailored industry analysis in 30 seconds for $20/month?

This does not mean Gartner is dead. That would be naive. Their influence runs deep in procurement departments and boardrooms where "Nobody ever got fired for buying IBM" is still a maxim. They validate vendor legitimacy and shape enterprise narratives.

But the "curse"—the monopoly on sense-making—is breaking.

The erosion is happening through four parallel forces:

- Trust Decay: The giants have missed too many shifts (see: Metaverse, Blockchain, initial AI wave), weakening their prophetic status.[2]

- Niche Competitors: Agile, compact foresight teams (like FTSG) are emerging, powered by modern media chops and AI tools.

- Democratized Futures: Institutions like the Institute for the Future are scaling impact through online communities rather than gatekept consulting.

- Internalization: Big Tech is building its own internal "Gartners." Why buy data when you own the platform that generates it?

It is too early to write the obituary for the consulting giants. But as they struggle to justify their fees and speed, the market is voting with its wallet. The incentive for IT firms is shifting: don't outsource your brain. Internalize strategic research and foresight.

[1] In 2022, McKinsey claimed the Metaverse would generate $5T in value by 2030, calling it "too big to ignore." Three years later, Mark Zuckerberg is quietly defunding the VR lab. The "experts" were just echoing the hype.

[2] In 2025, Reuters compared global consultants to Kodak. As AI makes data analysis cheap, clients question why they are paying millions for slides that an AI agent could generate for pennies.

5. Total "Trend-Washing"

Trend-washing: The act of mistaking awareness of trends for genuine strategic insight. It’s trend-watching minus the thinking.

A harsh reality inside many IT companies is that the so-called "strategic function" is essentially a hostage. It is often embedded within Finance or Operations, where the only strategic question that matters is: How do we hit our revenue targets this quarter?

This is a necessary question, but it isn’t strategy; it’s accounting with a forward bias. For the medium and long term, these teams rely on external forecasts produced by organizations that claim to track global dynamics. Companies assume these forecasts reflect reality. In practice, they are often hallucinations.[1]

The Metaverse example mentioned earlier is not the only one. McKinsey also predicted an almost trillion-dollar collapse of office workspaces in 2021, an almost $4T market for generative AI in 2023, and a roughly $11T market for employee well-being and healthcare (probably right before the vast majority of companies cut their DEI programs).

Even when wrapped in careful language—potential, beliefs, scenarios—these projections are too unstable for long-term commitments. Building a strategy on them is the corporate equivalent of a "Hail Mary" pass: pray for a miracle and move on when it lands out of bounds.

Another common configuration places "strategy" under Marketing. Here, it quickly devolves into a sales narrative, constrained by annual or even monthly planning cycles. Time collapses. Contextual moves dominate. Instead of tracking structural trends, organizations end up chasing short-lived signals: social media waves, viral narratives, and fashionable buzzwords.

The third variant is the Product Strategy. This is usually a Frankenstein’s monster assembled from feature roadmaps, architectural constraints, and user research. Nearby sits the "Technology Strategy," which usually boils down to: allocating 20% of resources to fix the technical debt we created last year.

All these approaches are internally coherent. None of them are forward-looking.

Increasingly, leaders sense this gap. They realize they are missing longitudinal thinking—a view that extends beyond the next sprint or the next fiscal year. This anxiety explains the sudden explosion of technology-focused executive education programs.[2]

The list of genuinely long-term challenges is growing, and none of them can be solved in a two-week sprint:

- Combating state-sponsored cybercrime and espionage.

- Delivering on ESG commitments under tightening regulatory scrutiny.

- Adapting to massive workforce shifts and migration policies.

- Managing fragile dependencies across geopolitical supply chains.

These challenges require incremental, multi-year institutional learning. For established IT firms—those with deep supply chains and regulatory exposure—trend-watching is no longer sufficient. Strategy must evolve from reacting to fashionable narratives to a systematic engagement with uncertainty.

[1] The Metaverse example is not unique. McKinsey predicted in 2021 that office work would suffer a trillion-dollar collapse (it didn't), in 2023 that generative AI's added impact would reach $4.4T (as of 2026, economists from EY suggest AI investments will boost global GDP by only 0.5-1.0% by 2033), and in 2025 that the employee well-being market would hit $11T (right before everyone cut their DEI budgets).

[2] Business schools like MIT Sloan, Wharton, and INSEAD have rushed to create EMBA tracks for tech leaders, emphasizing systems thinking and complexity over standard management theory.

6. The Inadequacy of Classic Foresight

If you search job boards for "Strategic Foresight" roles in Big Tech, you will find a rounding error. They exist, but barely. This reflects a brutal correlation: no perceived demand, no sustained supply.

Even where these roles exist, they are often compensated as support staff. In the U.S., a Senior Strategic Analyst often earns significantly less than a Senior Software Developer. This isn't just an HR artifact; it’s a value statement. It says: Strategy supports execution. The code is the product; the thinking is just the wrapper.

But the problem runs deeper than pay bands.

At its core, the IT sector lacks a commonly shared model of itself. We have no stable framework to describe the mechanics of socio-technical ecosystems. We are still arguing over whether a 30% App Store fee is a tax, a rent, or a service charge. We are still debating if Moore’s Law is dead or just resting.

Without shared definitions, the industry is a black box. In traditional PESTEL analysis, the entire IT universe is often collapsed into a single letter: "T".

In these conditions, applied foresight struggles to gain traction. Without internal structure, foresight becomes descriptive rather than explanatory.

There are, however, several silver linings:

- External Drivers: Foresight can still treat technology as an input for other industries (retail, logistics), which works well enough even if the "black box" remains closed.

- Migration of Talent: Mature, non-IT organizations (Toyota, PepsiCo, the UN) have robust foresight functions. Over time, these practitioners will migrate into Tech, bringing their methods with them.

- Government Definition: Governments love categories. As they regulate talent, exports, and AI, they will define the IT sector for us—whether we like their definitions or not.

- Fear of Speed: The panic over AI progress creates a market for "plausible scenarios." There is now a demand for someone to replace abstract dystopian fear with structured exploration.

- Proto-Foresight: The industry has quietly accumulated tools that look like foresight. OKRs (objective-setting), CMMI (maturity models), and Inclusive Design all contain embedded assumptions about the future. These are the seeds we will return to later.

7. It Was All Too Easy

For decades, IT grew faster than anything else, transforming everything it touched. FinTech, HealthTech, GovTech—the "Tech" suffix was a badge of conquest.

Throughout this period, the sector played a double game. When convenient, it was a neutral provider ("We are just the tool"). When profitable, it was the driver of civilization ("We are the future"). This ambiguity allowed it to bypass the constraints that bind normal industries.

That era is ending.

Not tomorrow. Not suddenly. Not catastrophically. Not completely. But ending nonetheless.

The primary reason is scale. The cumulative actions of ten million developers are no longer invisible; they are geologically significant.

- Infrastructure at Civilizational Scale. IT is no longer just code; it is heavy industry. Subsea cables, satellite constellations, and hyperscale data centers require rare earth materials, massive manufacturing, and political protection. When Sam Altman talks about a trillion-dollar AI industry, he isn't talking about software; he's talking about concrete and steel.

- Environmental Reality Checks. You cannot claim to be "lightweight" when your electricity bill rivals that of a medium-sized country. Carbon neutrality commitments are colliding head-on with the energy demands of AI compute.[1] Marketing narratives can no longer mask physical limits.

- The Talent Cliff. For years, Tech relied on a "hunter-gatherer" model for talent—poaching the best and ignoring the rest. The industry has done remarkably little to expand the long-term supply of skilled labor, preferring to lobby for migration quotas rather than build universities. That bill is coming due.

- From Platforms to Sovereigns. Global IT vendors have crossed the line from participants to de facto governments. They levy taxes (app store fees), police speech (content moderation), and control trade (API access). No nation-state enjoys sharing power with a corporation. With AI, these frictions will explode.

At this scale, you can no longer improvise. A herd of elephants cannot sprint like a cheetah; it moves deliberately, constrained by the terrain.

Crucially, this is not just a problem for the giants. Regulation cascades downward. Ask any European SME about GDPR or AI compliance. What starts at Meta ends up in your backlog.

When growth was easy, intuition was enough. When impact was diffuse, responsibility was optional. None of those conditions hold anymore.

[1] Mark Zuckerberg is planning a data center the size of Manhattan. That requires gigawatts of power, not just a few solar panels on the roof.

The seven dynamics we’ve covered mark the end of the "move fast and break things" era. Economic normalization, organizational maturity, and physical scale now impose constraints that cannot be coded away.

Forward-looking thinking is no longer a luxury—but neither is it straightforward. The foresight methods that work for PepsiCo often fail when applied to Google, and internal strategy teams are too busy fighting fires to look at the horizon.

Before we dive into the profession of foresight, we need to look at how new practices actually emerge in IT.

Inclusive Design, which we will explore next, is the perfect case study. It is a practice that successfully took root in the industry, and it serves as a conceptual bridge to Futures Studies. If we want to know how to make foresight work in Tech, we should look at how Design paved the way.